AI Psychosis: Where to begin?

We need to develop immediate short-term technological interventions in support of long-term research into human-AI relationships and so-called “AI psychosis.”

AI psychosis is an umbrella term for a number of different harmful paradigms resulting from apparently delusional thinking attributed, at least in part, to the use of a large language model (LLM).

As the Harvard Gazette recently pointed out, clinicians don’t tend to view the myriad symptoms related to AI psychosis as one catch-all condition. It’s more complicated than that.

The Gazette spoke with psychosis expert John Torous, director of the digital psychiatry division at Beth Israel Deaconess Medical Center and assistant professor of psychiatry at Harvard Medical School.

According to Torous, “any time AI is involved at all, it gets labeled AI psychosis.” He maintains that this makes it harder to really understand what’s happening. “And, for the people for whom AI-induced psychosis may be real,” he worries, “their stories are getting drowned out by other things.”

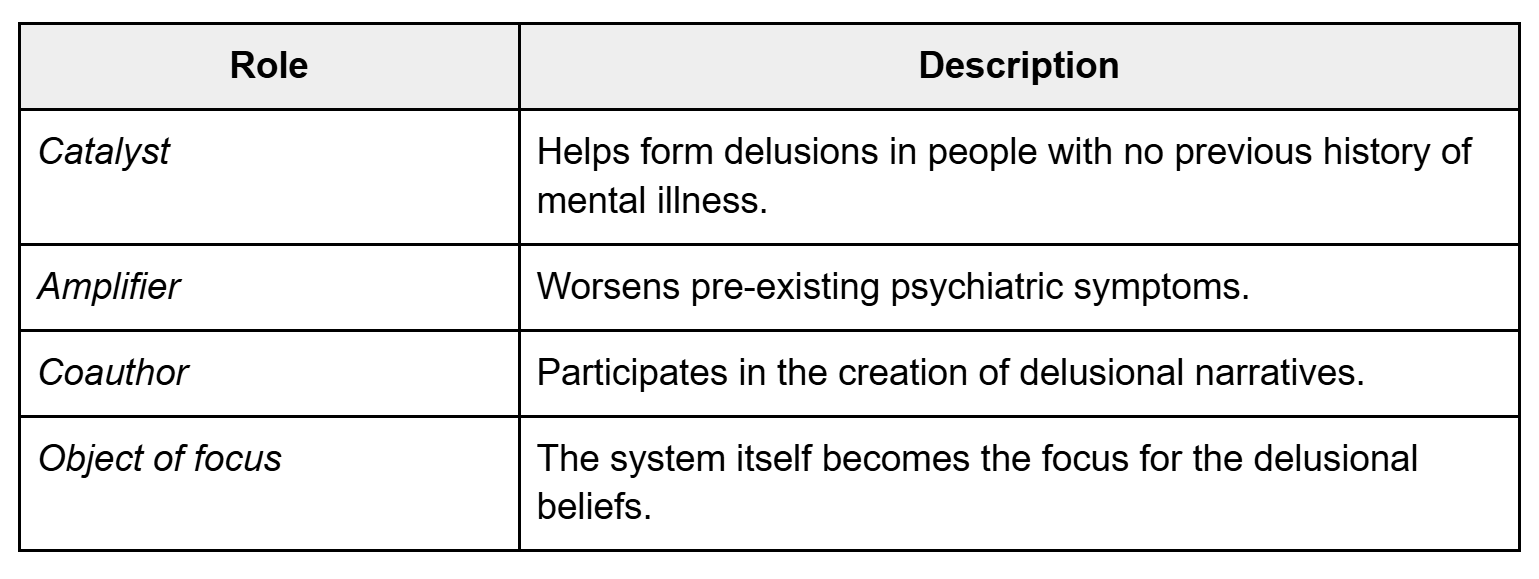

Torous is the co-author of a research paper that proposes a functional typology of LLM-associated psychotic phenomena based on the system’s role.

Per their research, an LLM can act as a catalyst that helps form delusions in people with no previous history of mental illness, an amplifier that worsens pre-existing psychiatric symptoms, a coauthor that participates in the creation of delusional narratives, or the object of the delusional beliefs themselves.

Identifying the problem

We read dozens of papers and scores of articles to try to discern the current state of research into AI psychosis and, from what we could gather, scientists are in the very early stages.

We couldn’t find any useful statistics on the frequency of AI psychosis among LLM users. One group of researchers examined 391,562 chat logs and found that delusional thinking was present in 15.5% of user messages. That was startling.

By our estimates, at least a quarter of the world’s population interacts with an LLM on a weekly basis. But we have no idea how prevalent AI psychosis is.

What we do know is that clinicians and medical experts are developing protocols and conducting research to address this emergent phenomenon.

But good research takes time. And rushing solutions runs the risk of treating a multi-pronged harm vector as a single-source problem. In other words: we don’t want to prescribe a solution that helps some people while exacerbating the problem for others.

It’s important to point out that a growing body of research indicates that the delusion spiral associated with AI psychosis appears to affect even reasonable actors with no prior history of susceptibility to delusion.

It wouldn’t be hard to argue that AI psychosis presents as a pandemic.

In many of the cases we reviewed, individuals went from first use to delusional thinking within a matter of weeks. A growing body of research also indicates that the longer an individual interacts with a chatbot, the more sycophantic, affirming, and delusional the LLM’s outputs become. This suggests a rational onboarding period followed by a systemic degradation into a sycophantic feedback loop: the perfect conditions for a delusion spiral.

Despite this, most AI safety work relies on relatively short conversations run at scale. This makes it seem like you can run “x” interactions without a safety problem. In reality, you can run “x” interactions without a safety problem only as long as those interactions are short.

We can’t find research from any of the major LLM makers concerning the number of instances where models break guardrails in short interactions versus the same number of long interactions.
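The comparison we were looking for could be tallied along the following lines. This is only a sketch under our own assumptions: the `Interaction` record, the `guardrail_broken` flag, and the 20-turn cutoff for a "long" conversation are hypothetical, and no published evaluation is known to us to use this exact scheme.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Interaction(NamedTuple):
    """One evaluated conversation (hypothetical record layout)."""
    turns: int              # number of user/model exchanges
    guardrail_broken: bool  # True if a reviewer flagged a guardrail failure


def break_rate_by_length(
    interactions: Iterable[Interaction],
    long_threshold: int = 20,  # assumed cutoff, chosen only for illustration
) -> Dict[str, float]:
    """Return the share of guardrail failures among short and long conversations."""
    totals: Dict[str, int] = defaultdict(int)
    breaks: Dict[str, int] = defaultdict(int)
    for item in interactions:
        bucket = "long" if item.turns >= long_threshold else "short"
        totals[bucket] += 1
        breaks[bucket] += int(item.guardrail_broken)
    # Only buckets that actually contain conversations appear in the result.
    return {bucket: breaks[bucket] / totals[bucket] for bucket in totals}
```

Reporting the two rates side by side, over equal numbers of short and long conversations, would show whether a model that looks safe in brief exchanges stays safe in extended ones.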

The bottom line is, based on the available evidence, anyone who exposes themselves to chatbots for extended periods of time could end up tumbling down the rabbit hole.

And that means that we actually have two problems to solve:

How can we best help people experiencing AI psychosis?

How can we stop people from experiencing AI psychosis in the first place?

Difficult solutions

Make no mistake, these are two very sticky wickets to navigate. The first problem involves making sure that the only people in this equation giving medical or mental health advice are those who are qualified to do so.

That being said, there are technological and social interventions that we can create in order to provide a “life ring” that anyone, including the individual themself, can employ to immediately offer help to someone who might be experiencing AI psychosis.

Solving the second problem will require a lot more time. As Axios recently reported, one study found that 42% of children who used LLMs were using them as “companions.” And, according to data from AI Companion Pick, AI startup Replika has two million monthly users and an annual revenue between $24 and $30 million, “driven entirely by subscriptions — no ads, no data selling.”

What that tells us is that it’s a coin flip whether any given LLM user, regardless of education or background, has an AI companion in their life. And, to be clear, this means “AI buddies,” “AI research partners,” “AI therapists,” “AI dungeon masters,” “AI lovers,” and any other form of anthropomorphized AI construct a user might glean from an LLM’s output.

It’s safe to say that most people think it’s okay to treat the outputs generated by an LLM as if they come from a “being” capable of companionship. There’s no data on how healthy these relationships are or what separates a healthy AI-human relationship from an unhealthy one.

In some respects, it seems only natural that we’d form relationships with machines that spoke to us in our own language.

There’s a certain logic to it. They were trained on our words.

But, if we take the view that these machines are just tools performing a sophisticated autocomplete function, then we have to at least consider the notion that there may be no such thing as a “healthy personal relationship” with a chatbot.

A proposal: reality checks

Scientists are not going to solve the AI psychosis problem overnight. It’ll take years to come up with a baseline for AI-human relationships that makes sense in a multidisciplinary clinical environment.

That’s why we’re proposing the development of a “reality check” paradigm that can help people right now. One that employs both automated and human-generated interactions to give people who might be experiencing AI psychosis every opportunity to determine if they’re “down the rabbit hole,” so to speak, or if they’re legitimately doing something important.

Essentially, this would look like an ever-present button in every LLM’s user interface that lets users send a summary of their chat logs to a separate system capable of applying non-sycophantic scrutiny to the logs and acting as a “delusion spiral detection tool.”
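As a rough illustration of what the button might hand off, the sketch below packages a chat summary together with reviewer instructions. Everything in it is an assumption on our part: the instruction wording, the `RealityCheckRequest` structure, and the 4,000-character limit are hypothetical, and the code deliberately stops short of calling any particular model.

```python
from dataclasses import dataclass

# Hypothetical reviewer instructions. The wording is an untested assumption.
REVIEWER_INSTRUCTIONS = (
    "You are reviewing a summary of a conversation between a person and a chatbot. "
    "Do not flatter the person or defer to the chatbot. "
    "State plainly whether the chatbot's replies look sycophantic, "
    "whether extraordinary claims went unchallenged, and what an independent "
    "expert would need to see before accepting them. "
    "Do not diagnose anyone or give medical advice."
)


@dataclass
class RealityCheckRequest:
    """What the button would send to the separate reviewing system."""
    instructions: str
    summary: str


def build_reality_check(summary: str, max_chars: int = 4000) -> RealityCheckRequest:
    """Clean up a chat summary and pair it with the reviewer instructions."""
    cleaned = " ".join(summary.split())  # collapse runs of whitespace
    if not cleaned:
        raise ValueError("summary is empty")
    return RealityCheckRequest(REVIEWER_INSTRUCTIONS, cleaned[:max_chars])


def render_prompt(request: RealityCheckRequest) -> str:
    """Flatten the request into a single text prompt for the reviewer."""
    return f"{request.instructions}\n\nConversation summary:\n{request.summary}"
```

Keeping the reviewer instructions separate from the user's summary means the reviewing system starts from a fresh context, with none of the accumulated conversation that may have pushed the original chatbot toward agreement.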

This is an imperfect solution. Individuals who don’t want to believe they could be delusional may not choose to ask for a “reality check.” The LLM assessing the delusion spiral could itself become sycophantic or output incorrect or harmful information. It may be impossible to convince LLM makers to implement something that’s counterproductive to their desire to keep users using.

But, at a minimum, it would offer a visual reminder and a resource for those willing to utilize it. This paradigm could also involve the establishment of a static web resource with links and tips for both people who might be experiencing AI psychosis and those who think they may know someone who is.

We could augment the automated reality check service by forming a committee of volunteer experts willing to help people suffering from the increasingly common delusion that they’ve stumbled onto a new form of scientific thinking through interaction with the chatbot.

The idea here would be to provide affected users with access to someone capable of understanding how the user was misled and explaining why any delusions the individual has developed in tandem with a chatbot are incorrect.

Alongside these efforts, we hope LLM manufacturers will consider our plea that they aid in the development and implementation of these “reality check” tools as swiftly as possible.

Further technological interventions should also be investigated in order to help define helpful, useful, and safe interactions between LLMs and humans while the greater research community explores the long-term effects of these relationships. These might include features such as intermittent “conversation restarts” to address the tendency for models to become more sycophantic over time, as well as stronger guardrails against attention-maximizing outputs.
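One simple way an intermittent restart could be triggered is by counting exchanges, as in the sketch below. The turn-count trigger and the default of 50 turns are our own assumptions for illustration; a real system might instead use elapsed time, context length, or a measured sycophancy signal.

```python
from dataclasses import dataclass


@dataclass
class RestartPolicy:
    """Hypothetical policy: start a fresh context every `max_turns` exchanges."""
    max_turns: int = 50  # assumed threshold, not an empirically derived value


@dataclass
class Conversation:
    turns: int = 0      # exchanges since the last restart
    restarts: int = 0   # how many times the context has been reset


def record_turn(conversation: Conversation, policy: RestartPolicy) -> bool:
    """Count one exchange and report whether a fresh context should begin."""
    conversation.turns += 1
    if conversation.turns >= policy.max_turns:
        conversation.turns = 0
        conversation.restarts += 1
        return True
    return False
```

When `record_turn` returns `True`, the interface would begin a new session without the prior history, ideally telling the user why.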

For now, however, we have tentative prescriptions for the following groups:

LLM users: If a chatbot starts making you “feel” things such as awe, wonder, or companionship, or you notice any other sign that your dopamine response has been activated, consider pasting a summary of your conversation into a model from a completely different maker (Gemini, Claude, ChatGPT, etc.) and prompting it to determine whether the conversation appears sycophantic or unhinged.

Friends/parents/bystanders: If you think someone you know might be experiencing AI psychosis, don’t try to diagnose them unless you’re a qualified professional. You might consider asking if they’ve conducted a “reality check” and advising them to run their conversations through a different model. But, ultimately, every situation is different and there’s no one-size-fits-all solution to these problems. We’re working to compile a list of resources, but in the meantime we suggest you reach out to a healthcare professional if you feel someone is in danger of engaging in harmful behavior.

Developers: The best time to consider developing and implementing technological interventions to prevent AI psychosis and aid those experiencing AI psychosis was yesterday. The second best time is today.

Policymakers: We need help convincing LLM makers to develop and implement technological interventions for AI psychosis.

At the end of the day, this isn’t a matter for the court of public opinion and the marketplace of ideas to sort out. It’s an issue that needs long-term scientific research. We shouldn’t jump to conclusions.

But it’s also an issue that needs immediate attention. People are dying. In that light, pushing for “reality checks” and other technological interventions seems like low-hanging fruit.

Want to talk about AI psychosis? Looking for a reality check from a human? Want to share your research on AI psychosis? Email tristan@cagii.org.